It’s very likely that during your marketing team meetings, you spend way too much time discussing whether you should use an emoji in your subject line or include a colorful CTA button to attract your subscribers.

What if I told you that there is a data-driven method to test your email ideas that saves you time and removes guesswork from your workflow?

Let’s get to it!

Yes, a touch of humor may work better in subject lines. A picture of a smiling person in your hero section might be a good trigger for clicks, or a video in your email body could help with conversions. Some of your nerdy team members could even go further and show you some research to prove their point.

Although it is great to have creative ideas for your email campaigns, they are all based on intuition or gut feeling.

Moreover, you may not know if those ideas are a good fit for your audience until you fully execute them.

Why marketing experiments are essential

To find out what really works for your email marketing goals, audience, and customers, you need to consider new campaign ideas and test different variations. The best way to stay innovative and relevant with your audience is to conduct experiments to calibrate your email marketing program.

In today’s data-driven era, you no longer need to depend on guesstimation where you “believe” a concept or a theory. You can prove the value of your marketing experiments, and it can lead you to make critical business decisions and set growth strategies accordingly.

If you don’t have a well-defined marketing experiment and testing methodology, you will be shooting in the dark with your actions. In a way, you’d be gambling with your time and resources.

Successful marketing teams can back their decisions with analytics, prove the effectiveness of their campaigns, and know very well what’s profitable (and what’s a no-go!). Once you create a data-driven marketing experiment and testing model, you take the first step to optimize your strategies and improve your marketing performance.

Email veterans would know it very well: sometimes, market conditions or regulations may force marketers to push for innovation. Remember GDPR, CCPA, or even the recent Apple Mail Privacy Protection updates? They have all been involuntary innovation triggers for email marketers. Adopting an experimentation mindset (voluntarily) will bring you on the path to innovation and help you stay profitable in today’s very competitive business environment.

“Test it before you send it”

As email marketers, we always say: test it before you send it. The #emailmarketing hashtag on Twitter and LinkedIn is full of stories where marketers sent a newsletter with several mistakes in it. Sometimes it remains a funny memory, and brands manage to recover with a follow-up email.

Well, there are also cases of premature sending without eliminating email mistakes, and nobody wants to remember them. It is also crucial to test your email messages to make sure that they are free from:

- grammar mistakes,

- typos,

- broken links,

- or any other structural issues.

Email marketing undergoes a constant transformation. Consumers are increasingly demanding a valuable inbox experience. Moreover, their expectations change rapidly, and marketers must adapt to their customers’ expectations and keep their engagement high. It is fair to say that the most fundamental reason for marketers to test their email campaigns is to fine-tune for their audience.

As many as 55% of marketers rarely or never test their email campaigns. If you’re reading this article, congratulations, you’re on the winning team!

In the rest of the article, we will cover:

- the importance of email testing,

- which email testing methods to use,

- and how to find the best combination of message components for your next email campaign.

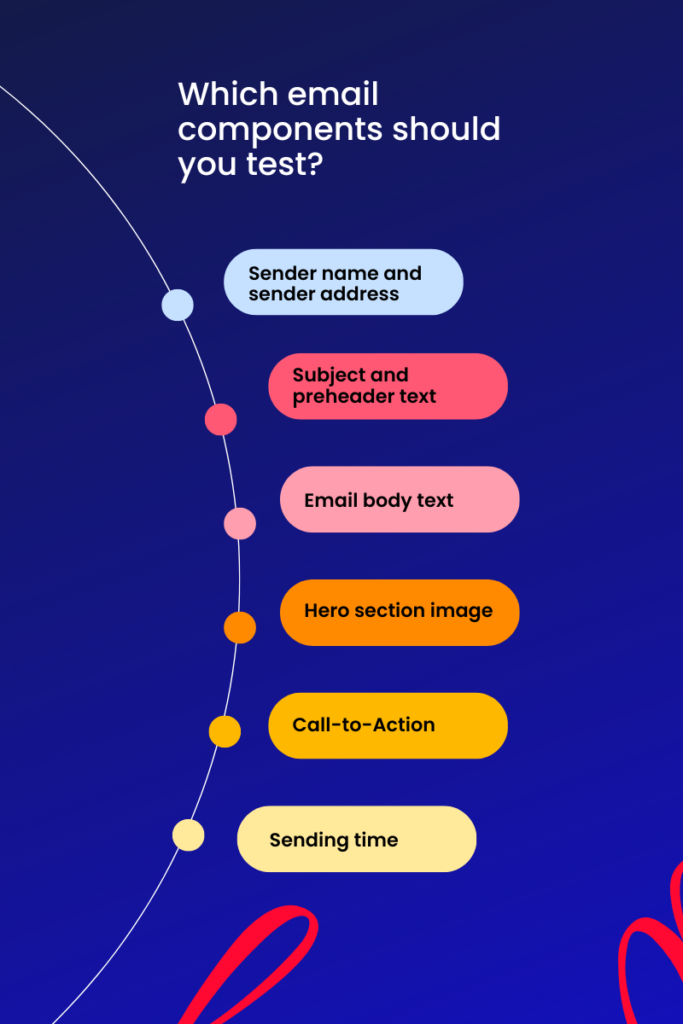

Which email components should you test?

The exciting fact about email testing is that there are so many email components (or scientifically, “factors”) to test, more than just subject lines or CTAs. Email marketers can easily experiment with different versions of email components and measure how they perform.

Obviously, you cannot test everything because sometimes it wouldn’t make sense or you simply need to put your efforts into generating the highest impact on your revenues.

Here are some testing ideas for various email components:

Sender name and sender address

When a subscriber receives an email, one of the first things to look at is the sender’s name. Imagine how many email messages an average person receives every day.

You can test the “from name” by using your company or brand name, or by replacing it with the name of one of your team members. A “humanized” sender name may attract more attention from your subscribers.

Subject and preheader text

Did you know that 69% of email subscribers report email messages as spam solely based on the subject line? Probably for this reason alone, the subject line is the most commonly tested email component in the industry.

According to Litmus, businesses primarily test the subject line of an email: as many as 78% of marketers who run A/B tests check the email subject line. Testing preheader text is becoming increasingly important since customers expect to see a compelling continuation of email subject lines.

Email body text

It’s all about capturing the hearts and minds of your audience. However, some might expect an informal and friendly text, while others might be interested in a formal and clear message.

You can develop different variations of your email body text and use email testing to deliver an appealing tone your subscribers would like to read.

Hero section image

Subscribers LOVE consuming visual content. Depending on your brand’s audience, you can test many email hero section image variations. Maybe your audience would respond better to lifestyle images than product images?

Consider testing larger vs. smaller product images, color combinations, and positions. The sky’s the limit when it comes to discovering your subscribers’ aesthetic preferences. In recent years, brands have also occasionally shared user-generated images to attract subscribers.

Call-to-Action

Having CTAs in your email can significantly boost your conversion and bring you the best results. Brands often test CTA buttons vs. links or design elements like button size, style, text, etc.

Moreover, the placement of a CTA button in an email message might be a game-changer based on the clicking patterns of your subscribers.

Sending time

Email subscribers engage with your campaigns at their own pace. Brands experiment with different sending times for certain segments to address specific campaigns at certain events, such as Holiday Season campaigns.

Almost all marketing automation systems offer Send Time Optimization (STO) features today. However, brands may have exceptions. For instance, if you have a store, you may not want to send emails when your locations are closed.

Another example concerns call centers. Limit your email sending times to your call centers’ operating hours and capacity. Otherwise, you may overload your team, and your customers will not be happy waiting on the line.

Similarly, for ecommerce & retail companies, using STO for a flash sale campaign is nonsense since the promotion is intended to be within a three-hour window. Nobody wants to get a flash sale message after the sale event is over.

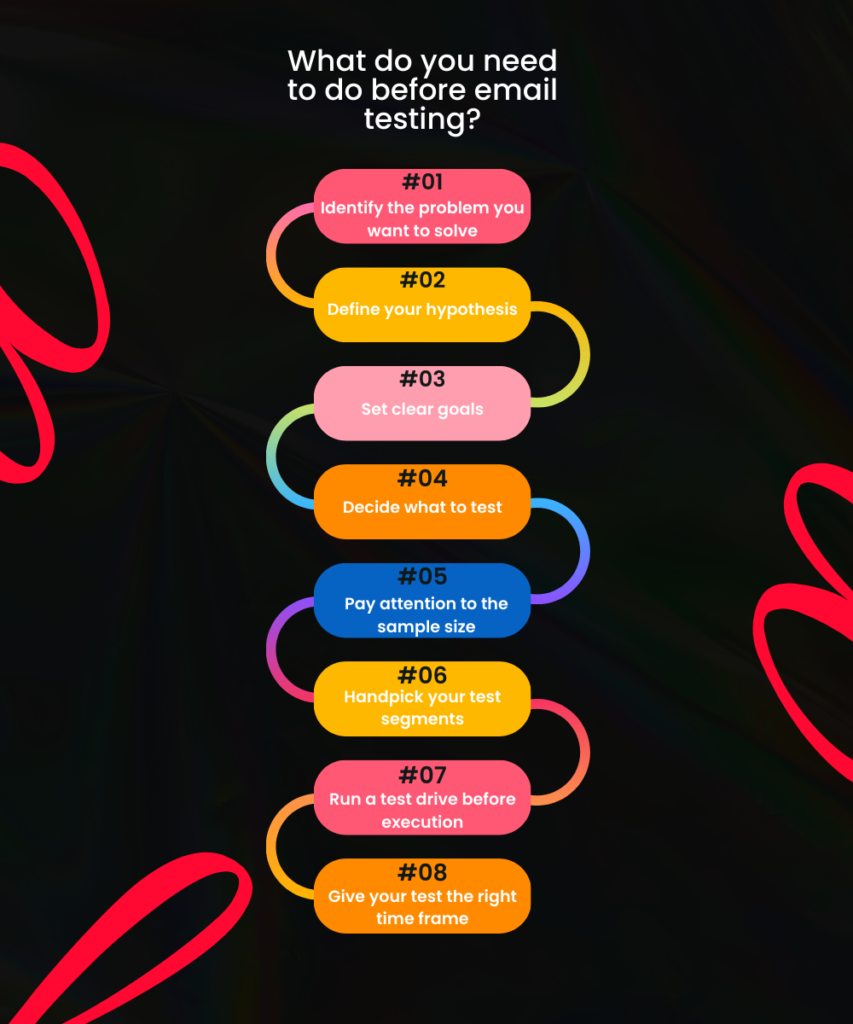

What do you need to do before email testing?

1. Identify the problem you want to solve

Email testing requires good planning and resources. If you’re about to start a test, you should have a good reason for it. Otherwise, there are better things to do in life.

What brings you to this stage is the need to answer a question or solve a significant problem. You must identify the problem and define it very clearly.

The next step is validating this problem with quantitative data. For instance, you observe high open rates with your email campaigns, but your CTR is very low. In this case, your email content doesn’t convert, and that is the problem you’re looking to solve.

2. Define your hypothesis

Once you determine the pitfalls and areas of improvement for your email campaigns, you can think of ways to solve these problems. To do this, you want to have a strategic hypothesis about why a particular variation might bring you better results than others.

A basic structure for your email testing hypotheses may look like this: “if I change this, it will have this effect.” For instance, “changing my email template from text-only to a good-looking design will increase CTR because subscribers find a good blend of text and images more attractive.”

From our professional experience, we notice that many email marketers are too quick to start email testing, and don’t use hypotheses. We strongly recommend defining your hypotheses since it allows you to build on previous testing results and use them for future improvements.

3. Set clear goals

You want to clearly understand what victory means for you. Are you doing email testing to affect email revenues directly? Is your final goal to increase the number of clicks or get more conversions or sales?

For instance, if you’re running a Black Friday campaign, getting a 10-20% uplift in your CTR would be a win!

On the other hand, your goal may simply be to learn more about your audience and see how they react to the changes you apply.

Usually, if you’re after getting to know your subscribers better, the results of these tests may not be drastic.
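To make a goal like the 10-20% CTR uplift above concrete, it helps to agree on how uplift is calculated. The sketch below assumes "uplift" means the relative change of the test version's rate over the baseline rate. The function name and the sample rates are hypothetical, not taken from any particular tool.

```python
def relative_uplift(baseline_rate: float, variant_rate: float) -> float:
    """Relative change of the variant over the baseline (0.15 means +15%)."""
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be positive")
    return (variant_rate - baseline_rate) / baseline_rate


# Hypothetical numbers: baseline CTR of 2.0%, test version CTR of 2.3%.
uplift = relative_uplift(0.020, 0.023)
print(f"Relative uplift: {uplift:.1%}")
```

Note that a relative uplift is not the same as a percentage-point change: going from 2.0% to 2.3% is a 0.3-point increase, which the function above reports as a relative gain.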

4. Decide what to test

At this stage, you can choose which email components you want to test. It’s crucial to have your test factors in line with your hypotheses.

If you go with A/B testing, you want to test a single variable at a time to get accurate and actionable insights.

If you have enough email volume, you can test more than two variables at the same time against each other but with one condition: the variables you test must align with your hypotheses.

5. Pay attention to the sample size

Before you run your experiment, you should ensure that you have the appropriate sample size for your email test. The ultimate goal of your experiment is to have reliable results so you can tell which email tactic to deploy.

In other words, you need a large enough sample size to reach statistical significance so that your results are unlikely to be due to chance. For instance, testing at a 95% confidence level means that if there were truly no difference between your versions, a difference as large as the one you observed would show up by chance only about 5% of the time.

If your test results don’t have a high statistical significance, your email marketing budget and subsequent campaigns are at risk.

Once your findings are statistically significant, you can have confidence that your results didn’t appear randomly. You can manually calculate your sample size before you run your test.

However, most marketing automation systems provide detailed information during the setup of an email test.
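If you do want to calculate the sample size by hand, the sketch below uses the standard normal-approximation formula for comparing two rates (for example, the click rates of version A and version B). It is an illustration under stated assumptions, not what any specific marketing automation system does: the function name, the 80% power default, and the example rates are all hypothetical choices.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, expected_rate, alpha=0.05, power=0.80):
    """Approximate subscribers needed per version to detect the given difference.

    alpha=0.05 corresponds to a 95% confidence level (two-sided test).
    power=0.80 is an assumed, commonly used default.
    """
    if baseline_rate == expected_rate:
        raise ValueError("rates must differ to compute a sample size")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (baseline_rate + expected_rate) / 2
    spread_null = math.sqrt(2 * p_bar * (1 - p_bar))
    spread_alt = math.sqrt(
        baseline_rate * (1 - baseline_rate) + expected_rate * (1 - expected_rate)
    )
    numerator = (z_alpha * spread_null + z_beta * spread_alt) ** 2
    return math.ceil(numerator / (expected_rate - baseline_rate) ** 2)


# Hypothetical goal: detect a lift in CTR from 2.0% to 2.4%.
needed = sample_size_per_variant(0.020, 0.024)
print(f"Subscribers needed per version: {needed}")
```

The smaller the difference you want to detect, the more subscribers each version needs, which is why low-volume lists are better served by testing bold changes.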

6. Handpick your test segments

Good! You made your college professor proud and calculated the sample size for your experiment. Now you need to consider two more important things when selecting your test audience segments.

First, to compare your different test versions fairly, you should have similar segments composed of subscribers who share common attributes. For instance, if your version A has a segment with new subscribers, pick the segment for version B from new subscribers as well.

Second, you should build the segments for your test versions from active subscribers. You can even match subscribers with similar or the same activity level. Imagine sending version A to an active segment and version B to an inactive one. Version A would almost certainly win, but the result would reflect the difference between the segments rather than the emails, so your experiment would be invalid.
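One way to get comparable segments is to group subscribers by activity level first and then randomly split each group across the test versions. The following is a minimal sketch of that idea. The subscriber records, the `activity` field, and the function name are hypothetical, and your platform may well handle this for you.

```python
import random
from collections import defaultdict


def split_into_variants(subscribers, variants=("A", "B"), seed=42):
    """Randomly assign subscribers to versions, balancing each activity level."""
    rng = random.Random(seed)  # fixed seed keeps the split reproducible

    # Group subscribers by their (hypothetical) activity level.
    by_level = defaultdict(list)
    for subscriber in subscribers:
        by_level[subscriber["activity"]].append(subscriber)

    # Shuffle within each level, then deal subscribers out in turn.
    assignment = {variant: [] for variant in variants}
    for level in sorted(by_level):
        group = by_level[level]
        rng.shuffle(group)
        for index, subscriber in enumerate(group):
            assignment[variants[index % len(variants)]].append(subscriber)
    return assignment


# Hypothetical list: every third subscriber is highly active.
subscribers = [
    {"email": f"user{i}@example.com", "activity": "high" if i % 3 == 0 else "low"}
    for i in range(12)
]
segments = split_into_variants(subscribers)
for variant, members in segments.items():
    high = sum(1 for member in members if member["activity"] == "high")
    print(variant, len(members), "subscribers,", high, "highly active")
```

Because the split happens inside each activity level, both versions end up with the same mix of active and less active subscribers.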

7. Run a test drive before execution

Before you click the button and start your email testing, it is recommended to have a quality assurance step.

It involves running a test drive with a small number of subscribers to walk through the process and ensure your setup is done correctly. Don’t forget to include yourself in the sample.

8. Give your test the right time frame

We often receive the question from brands: “How long should I wait for this experiment?”

The time frame may change, but the answer is always the same: You must wait until your email test is statistically significant. It mainly depends on the test audience size and how soon they engage with your test emails.

You don’t want to end your test prematurely, before enough results have come in for the analysis that follows.
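To check whether a finished test has reached statistical significance, a two-proportion z-test is one common choice. The sketch below assumes you compare click rates between two versions. The function name and the counts are hypothetical, and your email platform may use a different statistical method.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(clicks_a, sent_a, clicks_b, sent_b):
    """Return (z, two-sided p-value) for the difference in click rates."""
    rate_a = clicks_a / sent_a
    rate_b = clicks_b / sent_b
    # Pooled rate under the assumption that both versions perform the same.
    pooled = (clicks_a + clicks_b) / (sent_a + sent_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical results: 200 clicks from 10,000 sends vs. 260 from 10,000.
z, p_value = two_proportion_z_test(200, 10_000, 260, 10_000)
significant = p_value < 0.05  # 95% confidence level
print(f"z = {z:.2f}, p = {p_value:.4f}, significant: {significant}")
```

A word of caution: checking the p-value repeatedly and stopping as soon as it dips below 0.05 increases the chance of a false winner. Deciding on the sample size before the test starts and evaluating once it is reached keeps the result trustworthy.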

What are the email testing methods out there?

Modern email marketers have moved away from decisions based on guesswork. Instead, they take a scientific approach and rely on data.

In a data-driven marketing team, the best way to eliminate uncertainty is to embrace email testing methodologies based on their email marketing strategy.

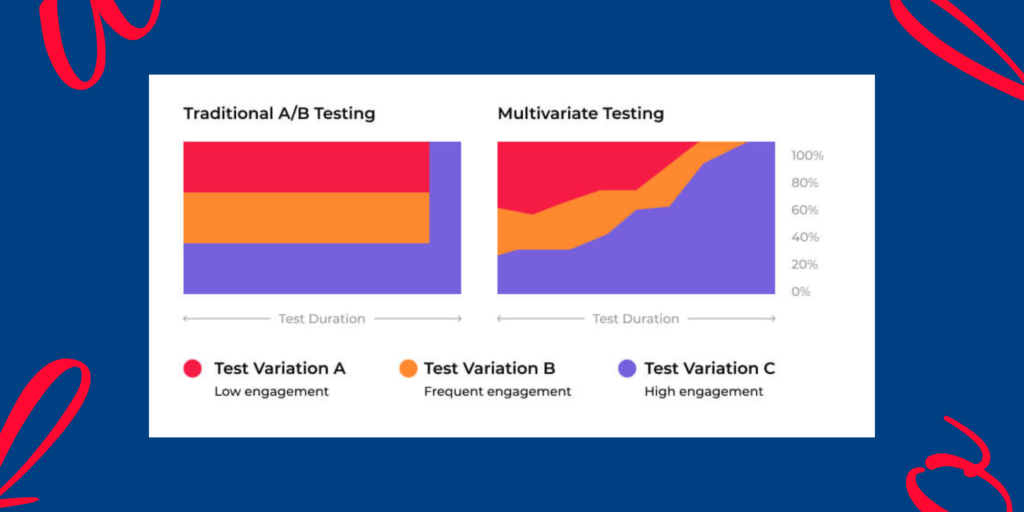

The most common email testing methods are the good old A/B Testing and AI-powered Multivariate Testing (MVT). Let’s have a closer look at them.

A/B testing

A/B Testing – also called split testing – is the most popular email testing method, which compares the performance of two different versions of an email component (subject line, images, CTA, send time, etc.) to determine which version generates better results.

Based on statistical analysis, A/B testing allows marketers to see which version works better for the target audience. A/B Testing is not just used to evaluate email marketing performance.

The goal of A/B Testing is to reach email subscribers more effectively by optimizing email campaigns and delivering a better customer experience.

If applied correctly, email marketers can see higher open and click rates, improved subscriber engagement, and ultimately better ROI from email marketing efforts.

Research shows that brands don’t only apply A/B testing to their email activities. They also A/B test their websites, landing pages, or paid search ads.

This is a good thing, because testing across multiple marketing channels helps marketing teams collect those results and analyze them to execute combined strategies.

For instance, if using a subject line spiced up with a little curiosity tone increases your open rates, you can implement the same copywriting tactic for your social media ads.

Multivariate Testing (MVT)

Although more than half of successful digital marketers engage in Multivariate Testing, there is still some confusion over what Multivariate Testing (MVT) is, how it works, and what makes it different from A/B testing.

Multivariate Testing is an email testing method that allows marketers to test different combinations of variables at once. The “Multi” in Multivariate Testing speaks for itself.

Email components such as sender name, subject line, header, image, CTA, etc., can all be tested concurrently on a selected group of subscribers to determine which combination yields the highest results depending on the email metric used for performance assessment.

MVT provides more insights to learn more about winning combinations instead of drilling down on a specific email component.

The goal of Multivariate Testing is to:

- improve subscriber engagement,

- increase conversions from your emails,

- and ultimately, increase revenues through your email marketing campaigns.

Even the smallest changes can make a huge impact on your results. MVT helps you automatically measure the impact of each email component you test.

How does Multivariate Testing work?

Running an experiment with Multivariate Testing is very similar to A/B Testing. Again, we recommend the framework instructions above describing what to do before starting an email test. The main difference in running those two primary email testing methods is the number of variables you include.

Thanks to the advanced marketing automation solutions today, the whole testing process is done by your system. Usually, the MVT setup is straightforward. Most solutions offer up to 4 email components to perform MVT.

When configuring the test, you must select which email components you want to test. At this step, you may want to have the variations for each email component available.

Once you create your test combinations, you must set your goals and audience size for this experiment.
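To see how quickly test combinations add up, you can enumerate them yourself. The sketch below builds every combination from a few components and estimates the audience needed. The component names, the variations, and the minimum of 1,000 subscribers per combination are hypothetical placeholders.

```python
from itertools import product

# Hypothetical email components and their variations.
components = {
    "sender_name": ["Brand", "Person at Brand"],
    "subject_line": ["Question", "Statement", "Emoji"],
    "cta": ["Button", "Text link"],
}

# Every combination is one value per component: 2 * 3 * 2 combinations here.
combinations = [
    dict(zip(components, values)) for values in product(*components.values())
]

min_per_combination = 1000  # assumed minimum audience per combination
total_needed = len(combinations) * min_per_combination

print(f"{len(combinations)} combinations, {total_needed} subscribers needed")
for combination in combinations[:3]:
    print(combination)
```

Adding one more variation to any component multiplies the number of combinations, which is why MVT needs a larger audience than a simple A/B test.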

Some marketing automation solutions offer testing for a specific time, testing up to a certain number of subscribers, or having your test continuously. You may decide on the one that suits you best for testing purposes. After these final settings, you can begin the test!

It’s very convenient for marketers since the system automatically determines the winning combination and deploys it to the rest of your audience. This hassle-free execution model eliminates the manual work and, obviously, the human error factor.

Where does AI step in for Multivariate Testing?

We interact with Artificial Intelligence countless times every day without even noticing it. AI technologies make our daily lives easier as consumers and marketers. The benefits of AI in marketing are numerous, and AI integration in MVT is the next big revolution.

AI integration allows email systems to analyze the subscriber feedback for each test combination of the MVT process. The AI solution presents the winning combination based on your test goals, and the best-performing combination can be deployed to the rest of your subscribers.

In traditional A/B Testing practices, the test runs until statistical significance is reached, and the winning version is then deployed to the rest of your subscriber list all at once.

On the other hand, AI-Powered Multivariate Testing uses machine learning algorithms with a multi-armed bandit (or dynamic traffic allocation), automatically and gradually deploying the winning combination to your subscribers.

This way, the most optimized message combination starts reaching your subscribers for better engagement which translates into more clicks and revenues for your email marketing program.
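To illustrate what dynamic traffic allocation can look like, here is a minimal simulation using Thompson sampling, one well-known multi-armed bandit strategy. This is only a sketch: vendors don't necessarily use this exact algorithm, and the class name, combination names, and click rates below are all made up for the simulation.

```python
import random


class ThompsonSamplingBandit:
    """Allocates sends across combinations based on observed clicks."""

    def __init__(self, arms, seed=None):
        self.rng = random.Random(seed)
        # Start every combination with a uniform Beta(1, 1) prior.
        self.successes = {arm: 1 for arm in arms}
        self.failures = {arm: 1 for arm in arms}

    def choose(self):
        # Draw a plausible click rate per combination and pick the highest.
        samples = {
            arm: self.rng.betavariate(self.successes[arm], self.failures[arm])
            for arm in self.successes
        }
        return max(samples, key=samples.get)

    def update(self, arm, clicked):
        if clicked:
            self.successes[arm] += 1
        else:
            self.failures[arm] += 1


# Hypothetical "true" click rates, unknown to the bandit.
true_rates = {"combo_1": 0.02, "combo_2": 0.10, "combo_3": 0.04}
bandit = ThompsonSamplingBandit(true_rates, seed=7)
simulated_subscribers = random.Random(11)

sends = {arm: 0 for arm in true_rates}
for _ in range(10_000):
    arm = bandit.choose()
    sends[arm] += 1
    clicked = simulated_subscribers.random() < true_rates[arm]
    bandit.update(arm, clicked)

print(sends)
```

In a run like this, sends shift toward the best-performing combination as evidence accumulates, instead of waiting for the test to finish before switching everyone over.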

What do you need before executing Multivariate Testing?

First of all, you need the right technology to perform Multivariate Testing. Without using the right technology capable of creating the testing workflow, execution and complex analysis, Multivariate Testing is virtually impossible.

Once you have the technology in place, you need to know which problem or challenge you’re trying to solve and what insights you want to gain from your test.

A common way to approach your Multivariate Testing is to ask yourself some preliminary questions. It will help you mentally prepare for the planning phase of your Multivariate Testing.

- Do our subscribers prefer minimalistic emails or long descriptive templates?

- If we use a question in the subject line, does it help us get more opens?

- Does using an authentic personal name as a sender name get more opens than our brand name?

- Do our subscribers find images or CTA buttons more attractive to click?

You can go ahead with those questions, but the main focus here is to adjust your test initiative toward rapidly changing customer expectations in email marketing.

Although Multivariate Testing allows marketers to test as many email components as they want, there is a catch: you may want to prioritize the ideas most likely to get you the best results with the least effort.

The ICE score method by Sean Ellis can help you prioritize your Multivariate Testing goals.

The ICE score has three parts:

- Impact: How big of an impact do you think this might have? For instance, is testing a slight change in your subject line likely to have as big of an impact as testing the tone of your copy?

- Confidence: How confident are you that this change will have a positive impact? Testing proven tactics like personalizing the subject line is more likely to have a positive impact on conversions than changing the image style in your campaigns.

- Ease: How easy is it to implement this test? For example, testing the word order of your subject line would take less than 30 seconds, whereas testing different image styles requires you to create multiple images with different styling and will generally take longer.

Do yourself a favor and consider the three elements of the ICE score to help you grade each idea and prioritize which ones you should execute first.
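The grading described above can be kept in a simple script or spreadsheet. The sketch below assumes each element is rated from 1 to 10 and that the ICE score is the average of the three ratings. That is one common convention (some teams multiply the ratings instead). The ideas and their ratings are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Idea:
    name: str
    impact: int      # 1 (low) to 10 (high)
    confidence: int  # 1 (low) to 10 (high)
    ease: int        # 1 (hard) to 10 (easy)

    @property
    def ice_score(self) -> float:
        # Assumed convention: average of the three ratings.
        return (self.impact + self.confidence + self.ease) / 3


# Hypothetical test ideas with made-up ratings.
ideas = [
    Idea("New image style", impact=6, confidence=4, ease=3),
    Idea("Personalized subject line", impact=7, confidence=8, ease=9),
    Idea("Different copy tone", impact=8, confidence=5, ease=6),
]

ranked = sorted(ideas, key=lambda idea: idea.ice_score, reverse=True)
for idea in ranked:
    print(f"{idea.ice_score:.1f}  {idea.name}")
```

The exact numbers matter less than scoring every idea the same way, so the ranking reflects your team's shared judgment rather than whoever argues loudest.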

Tip: You can apply the same email testing framework described above from hypothesis creation to selecting the correct sample size.

What are the Pros and Cons of MVT?

| Pros | Cons |
| --- | --- |
| Allows marketers to test different combinations of email components in a single deployment, equivalent to performing several A/B tests. | Slightly more advanced to implement compared to A/B Testing. |
| Provides marketers the insights to understand how different email components interact with each other. MVT doesn’t only let you know that one version is better than another, but it identifies which variables work together and how. | Requires a significant volume to be statistically significant. If your subscribers’ list is too small to test all the email components you have in mind, you can start by testing fewer variations to eliminate the volume problem. |
| Deploys the winning combination automatically and gradually, which allows you to get as many clicks and as much revenue as possible from your test. | Needs the right resources in place since your team may need to create more variations of email components. |
| Encourages marketers to be more creative since they can test many email components. | Analyzing the MVT test results might be more complex than traditional A/B Testing. |

Over to you

Among many other AI-powered email marketing options, Multivariate Testing is fun to use, and it can undoubtedly bring you more insights about your email subscribers and, eventually, more revenues.

Multivariate Testing is becoming increasingly accessible for modern email marketers due to the rapid developments in the processing power and use of machine learning algorithms.

We recommend experimenting with your email marketing campaigns as an ongoing process to stay “in the know” regarding your subscribers’ email preferences. It will allow you to increase conversions while you satisfy their aesthetic desires and content expectations.

In short, Multivariate Testing is not a one-off process for your Black Friday campaigns but a vital part of your entire email marketing program.

What are your experiences in running email campaigns and split testing? Drop us a comment under this article!

And check out other articles on Cayenne Flow’s blog.

Editor’s note: Faruk Aydin is a seasoned and successful email marketer and a good friend of Cayenne Flow. He agreed to share the article he originally published on Inbox Suite’s blog. Here it is, after a round of editing and making it up-to-date.